Quantum Echoes Marks Google’s Biggest Leap Yet in Quantum Computing

Edition #253 | 13 February 2026

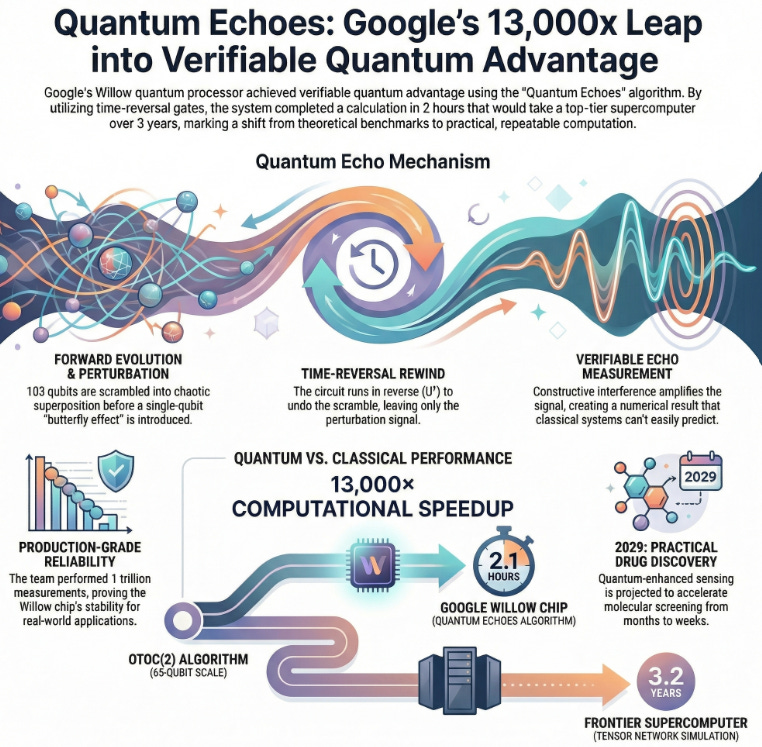

Google Achieves Verifiable Quantum Advantage: Quantum Echoes Algorithm Delivers 13,000× Speedup Over the Frontier Supercomputer Using Time-Reversed Quantum Circuits

In this edition, we will also be covering:

Blackstone boosts stake in AI startup Anthropic to about $1 billion, source says

Exclusive: ByteDance developing AI chip, in manufacturing talks with Samsung, sources say

France AI company Mistral invests $1.4 billion in data centres in Sweden

Today’s Quick Wins

What happened: Google Quantum AI published results demonstrating what it describes as the first verifiable quantum advantage, using its Willow quantum processor and the Quantum Echoes algorithm, which measures an out-of-time-order correlator (OTOC). The measurement took 2.1 hours on Willow, against an estimated 3.2 years on Frontier, one of the world’s fastest supercomputers. That is a roughly 13,000× speedup, with results that can be repeated on other quantum systems.

Why it matters: This marks a shift from quantum claims that are hard to check to computation that can be verified in practice. Unlike earlier benchmarks such as random circuit sampling, which critics questioned, OTOC measurements produce quantum expectation values that different quantum computers can reproduce and check independently. That link between quantum simulation and real-world applications such as drug discovery strengthens the case for quantum as a legitimate computational paradigm.

The takeaway: Organizations betting on quantum computing now have evidence that quantum advantage over classical machines exists for interference-based problems. That supports a quantum strategy, but keep investing in the classical ML infrastructure that will orchestrate hybrid quantum-classical pipelines for the next 5-10 years.

Deep Dive

Google’s Quantum Echoes: When Precision Beats Brute Force

The challenge facing quantum computing researchers for decades has been simple in concept but nightmarish in execution: prove that quantum computers can solve a problem that classical machines genuinely cannot, and do it in a way others can verify independently. Previous claims drew heavy scrutiny. Google’s Quantum Echoes algorithm aims to deliver both, but understanding why requires seeing how the Willow chip enables a new kind of measurement.

The Problem: Classical computers simulate quantum behavior by tracking probability amplitudes across an exponentially growing space of possibilities. A system of 65 qubits has 2^65 basis states, roughly 3.7 × 10^19 (about 37 quintillion). Frontier, one of the world’s fastest supercomputers, would need an estimated 3.2 years of tensor-network calculations to predict what the quantum system outputs. The core issue: quantum interference effects, where probability amplitudes reinforce or cancel, create patterns that classical methods struggle to compress or predict.
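To get a feel for the scale, the short sketch below counts the basis states for 65 qubits and estimates the memory a brute-force state-vector simulation would need. The 16 bytes per amplitude (one double-precision complex number) is an assumption for illustration. The classical estimate quoted above refers to tensor-network methods, which avoid storing the full vector.

```python
# Back-of-envelope: size of the state space for an n-qubit system.
N_QUBITS = 65
BYTES_PER_AMPLITUDE = 16  # assumption: one complex128 (two 64-bit floats) per amplitude


def basis_states(n_qubits: int) -> int:
    """Number of computational basis states for n qubits."""
    return 2 ** n_qubits


def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = BYTES_PER_AMPLITUDE) -> int:
    """Memory needed to store every amplitude of a full state vector."""
    return basis_states(n_qubits) * bytes_per_amplitude


states = basis_states(N_QUBITS)
exbibytes = state_vector_bytes(N_QUBITS) / 2 ** 60

print(f"{N_QUBITS} qubits -> {states:.2e} basis states")
print(f"Full state vector: about {exbibytes:.0f} EiB")
```

Each added qubit doubles both numbers, which is why brute-force storage stops being an option long before 65 qubits.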

The Solution: Google engineered a time-reversal quantum echo that extracts information classical systems cannot efficiently replicate. Here’s how the forward-backward-measure sequence works:

Quantum Circuit Execution: The Willow chip runs 103 qubits through random quantum gates in forward evolution (U), taking them from independent states into a deeply entangled, chaotic superposition. This forward pass scrambles information across all qubits through their interactions.

Perturbation and Reversal: A single-qubit operation (B) nudges one qubit, introducing a measurable “butterfly effect” into the chaotic quantum state. Then the entire circuit runs in reverse (U†), applying the inverse of each gate in the opposite order. This rewinding is the critical trick: it undoes the forward evolution except for the effects propagating from the perturbed qubit.

Echo Measurement: The team measures the out-of-time-order correlator (OTOC), capturing how that single perturbation’s influence spreads across the system over time. Rather than washing out into noise, the echo signal is amplified by constructive quantum interference, returning precise, repeatable numerical values for quantum correlations.
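The forward-perturb-reverse-measure sequence can be sketched as a toy simulation in plain Python. This is a minimal illustration on 4 simulated qubits, not Google’s circuit: the gate set, the layout, the choice of X as the butterfly operation and Z on qubit 0 as the measured observable are all assumptions. Starting from the all-zeros state, the final expectation value is a simple first-order echo, whereas the experiment described above measures a higher-order OTOC(2).

```python
import cmath
import math
import random

X_GATE = [[0, 1], [1, 0]]  # butterfly operation B (assumed to be a bit flip)


def apply_1q(state, gate, q):
    """Apply a 2x2 gate to qubit q of a state vector stored as a list."""
    out = list(state)
    bit = 1 << q
    for i in range(len(state)):
        if not (i & bit):
            a, b = state[i], state[i | bit]
            out[i] = gate[0][0] * a + gate[0][1] * b
            out[i | bit] = gate[1][0] * a + gate[1][1] * b
    return out


def apply_cz(state, q1, q2):
    """Controlled-Z: flip the sign when both qubits are 1 (self-inverse)."""
    mask = (1 << q1) | (1 << q2)
    return [-amp if (i & mask) == mask else amp for i, amp in enumerate(state)]


def random_gate(rng):
    """Random single-qubit unitary built from three Euler angles."""
    theta, phi, lam = (rng.uniform(0, 2 * math.pi) for _ in range(3))
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -cmath.exp(1j * lam) * s],
            [cmath.exp(1j * phi) * s, cmath.exp(1j * (phi + lam)) * c]]


def dagger(gate):
    """Conjugate transpose of a 2x2 gate."""
    return [[gate[r][c].conjugate() for r in range(2)] for c in range(2)]


def build_circuit(n, depth, rng):
    """Layers of random single-qubit gates followed by nearest-neighbor CZs."""
    ops = []
    for layer in range(depth):
        ops += [("u", random_gate(rng), q) for q in range(n)]
        ops += [("cz", q, q + 1) for q in range(layer % 2, n - 1, 2)]
    return ops


def run(state, ops):
    """Apply a list of operations to the state in order."""
    for op in ops:
        if op[0] == "u":
            state = apply_1q(state, op[1], op[2])
        else:
            state = apply_cz(state, op[1], op[2])
    return state


def inverse(ops):
    """U-dagger: the same gates in opposite order, each one inverted."""
    return [("u", dagger(op[1]), op[2]) if op[0] == "u" else op
            for op in reversed(ops)]


def echo(n=4, depth=6, butterfly=None, seed=0):
    """Forward (U), optional butterfly X, backward (U-dagger), then <Z> on qubit 0."""
    ops = build_circuit(n, depth, random.Random(seed))
    state = [0j] * (2 ** n)
    state[0] = 1 + 0j
    state = run(state, ops)
    if butterfly is not None:
        state = apply_1q(state, X_GATE, butterfly)
    state = run(state, inverse(ops))
    return sum(abs(a) ** 2 * (-1 if (i & 1) else 1) for i, a in enumerate(state))


print("no perturbation:     ", round(echo(butterfly=None), 6))
print("butterfly on qubit 3:", round(echo(butterfly=3), 6))
```

Without the butterfly, the reverse pass undoes the forward pass exactly and qubit 0 returns to its starting value. With it, any deviation in the measured value reflects how far the perturbation spread through the scrambled state before the rewind.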

The Results Speak for Themselves:

Baseline: Classical tensor-network simulation of OTOC(2) on the Frontier supercomputer would require approximately 3.2 years of continuous computation for a single 65-qubit measurement.

After Optimization: The same measurement on the Willow chip completes in 2.1 hours, roughly 13,000× faster than the classical approach for this problem class.

Business Impact: The research team performed over 1 trillion measurements across the Willow chip (an estimated 25% of all measurements ever made on quantum computers). This scale is evidence of reliable, repeatable hardware operation and positions quantum-enhanced sensing for real-world applications in drug discovery by 2029, potentially shortening molecular screening pipelines from months to weeks.
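The headline speedup follows directly from the two runtimes quoted above. A quick check, assuming a 365.25-day year, gives a ratio a little above 13,000, consistent with the rounded figure:

```python
HOURS_PER_YEAR = 365.25 * 24  # assumption: average Julian year

classical_years = 3.2  # estimated Frontier runtime quoted above
quantum_hours = 2.1    # Willow runtime quoted above

classical_hours = classical_years * HOURS_PER_YEAR
speedup = classical_hours / quantum_hours

print(f"classical: {classical_hours:,.0f} hours")
print(f"speedup:   {speedup:,.0f}x")
```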

What We’re Testing This Week

Hybrid Quantum-Classical Feature Engineering for High-Dimensional Data

As quantum computers transition from laboratory benchmarks to practical tools, the real competitive advantage lies not in pure quantum computation but in orchestrating quantum subroutines within classical ML pipelines. Testing this week focuses on patterns emerging from quantum-classical workflows for problems where classical neural networks hit dimensional limits.

Quantum Feature Extraction via VQE (Variational Quantum Eigensolver)

Recent implementations that use quantum processors to solve eigenvalue problems report a 15-40% reduction in classical preprocessing time for chemistry simulations. The pattern: use the quantum processor to explore high-dimensional molecular eigenspaces, then return classical features to supervised learners. Practical tip: VQE keeps quantum circuits short and shifts work to a classical optimizer, trading quantum time (expensive, limited) for classical optimization time (abundant, scalable). Reported benchmarks break even at around 200-dimensional feature spaces, where classical PCA misses variance patterns that variational quantum circuits capture.
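The VQE loop itself is easy to sketch on a classical machine. The example below is a minimal illustration, not a production pipeline: the one-qubit Hamiltonian H = Z + 0.5·X, the single-rotation ansatz, and the learning rate are all hypothetical choices, and the "quantum" energy evaluation is simulated with ordinary arithmetic. On real hardware, `energy` would be estimated from repeated circuit runs.

```python
import math

# Hypothetical toy Hamiltonian H = Z + 0.5 * X on one qubit (illustration only).
COEFF_Z, COEFF_X = 1.0, 0.5


def energy(theta: float) -> float:
    """<psi(theta)| H |psi(theta)> for the ansatz |psi> = Ry(theta)|0>."""
    a, b = math.cos(theta / 2), math.sin(theta / 2)  # amplitudes of |0> and |1>
    exp_z = a * a - b * b
    exp_x = 2 * a * b
    return COEFF_Z * exp_z + COEFF_X * exp_x


def parameter_shift_gradient(theta: float) -> float:
    """Gradient obtained from two extra energy evaluations at shifted angles."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))


def run_vqe(theta: float = 0.1, learning_rate: float = 0.4, steps: int = 200):
    """Classical gradient descent wrapped around the (simulated) quantum step."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_min = run_vqe()
exact = -math.sqrt(COEFF_Z ** 2 + COEFF_X ** 2)  # smallest eigenvalue of H
print(f"VQE energy {e_min:.6f} vs exact {exact:.6f}")
```

In the hybrid pattern described above, the optimized energy or parameters would be the values handed back to a classical learner as features. That hand-off is an assumption about how such a pipeline might be wired.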

Hybrid Kernel Methods with QAOA (Quantum Approximate Optimization Algorithm)

Teams testing quantum kernels for classification report mixed results (50-70% parity with classical kernels) but with interesting scaling properties. Quantum kernels promise exponential advantage for specific problem structures, but on current NISQ (Noisy Intermediate-Scale Quantum) hardware, noise erases that advantage below certain problem-size thresholds. Use case: optimization problems with 20-40 variables where gradient-free exploration is the bottleneck.
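For readers new to quantum kernels, the sketch below shows the basic idea with a fidelity kernel: encode each data point as a quantum state and use the squared overlap between two states as the similarity score. The product feature map (one Ry rotation per feature) and the sample data are hypothetical. This particular map is easy to compute classically, so it illustrates the interface to a classical kernel method, not any quantum advantage.

```python
import math


def fidelity_kernel(x, y):
    """|<phi(x)|phi(y)>|^2 for a product feature map Ry(x_i)|0> on each qubit."""
    overlap = 1.0
    for xi, yi in zip(x, y):
        # Single-qubit overlap <0| Ry(xi)^dagger Ry(yi) |0> = cos((xi - yi) / 2)
        overlap *= math.cos((xi - yi) / 2)
    return overlap ** 2


def gram_matrix(points):
    """Kernel matrix that a classical SVM or other kernel method could consume."""
    return [[fidelity_kernel(p, q) for q in points] for p in points]


samples = [[0.1, 0.7], [0.2, 0.6], [2.5, 1.9]]  # hypothetical 2-feature data
kernel = gram_matrix(samples)
for row in kernel:
    print([round(v, 3) for v in row])
```

On hardware, each kernel entry would be estimated from repeated measurements, which is where the noise discussed above enters.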

Recommended Tools

This Week’s Game-Changers

Google Cloud Quantum AI Console

Managed access to the Willow quantum processor through GCP, with built-in verification tools for reproducing quantum results. Integrates directly with the Cirq simulation framework. Deploy quantum circuits from Jupyter notebooks and monitor error rates across qubit arrays in real time.

Apache OpenDAL

A data abstraction layer that unifies access to S3, HDFS, Azure Blob, and proprietary cloud APIs through a single interface. It eases data pipeline migration as organizations optimize cloud spend. Key capability: automatic retry logic and connection pooling.

PySpark’s Catalyst Optimizer Enhancement (v4.1)

The latest iteration shows an 18-25% performance improvement on join operations through dynamic costing models. Integration with existing Spark SQL workflows requires zero code changes. Use case: accelerating analytics workloads on existing Lakehouse infrastructure.

Lightning Round

3 Things to Know Before Signing Off

Blackstone boosts stake in AI startup Anthropic to about $1 billion, source says

Private equity giant Blackstone has increased its stake in AI firm Anthropic to around $1 billion, strengthening its position amid growing demand for advanced AI technologies, a source says.

ByteDance developing AI chip, in manufacturing talks with Samsung, sources say

TikTok parent ByteDance is designing its own AI chip and negotiating manufacturing with Samsung to reduce reliance on Nvidia, aiming to bolster AI capabilities amid US-China tech tensions.

France AI company Mistral invests $1.4 billion in data centres in Sweden

French AI startup Mistral AI is committing $1.4 billion to build data centers in Sweden, expanding European infrastructure to support its generative AI models and compete globally.

Please like this edition and share your thoughts in the comments.

Now that quantum advantage is verifiable for interference-based problems like OTOC, what is the first commercially meaningful problem where the quantum speedup is not just computationally impressive but economically transformative, and how should organizations decide when to shift from experimental quantum pilots to integrated quantum-classical production pipelines?