Model Merge Alert: Why GPT-5 Changes Everything

Edition #164 | 18 July 2025

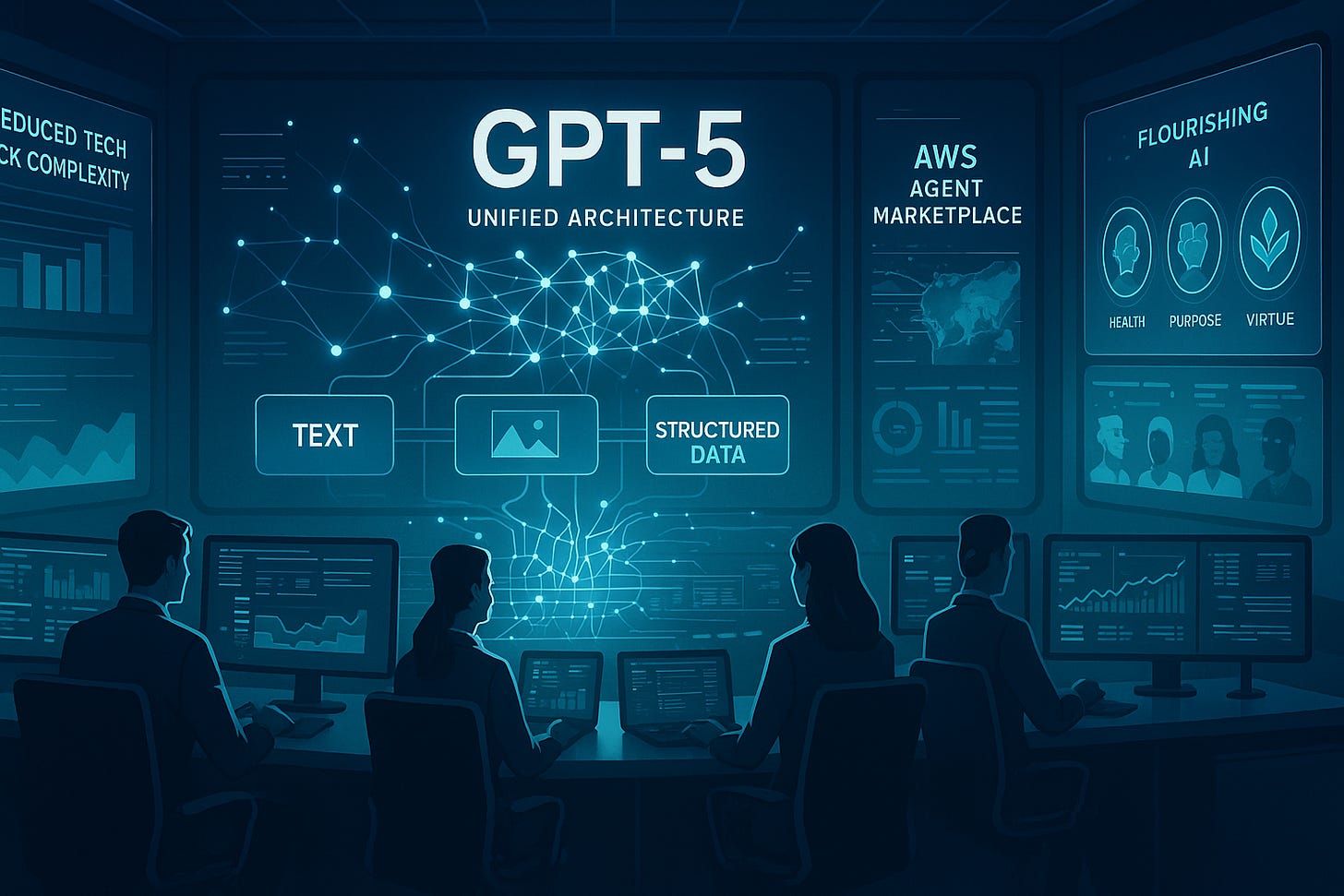

OpenAI Unifies GPT-5 Architecture Achieving 40% Efficiency Gains with Multi-Modal Reasoning Framework

Plus: Helios streamlines policymaking with AI-powered legislative workflows, AWS opens new frontiers with an agent marketplace backed by Anthropic, and Pat Gelsinger unveils Flourishing AI—an ethical benchmark redefining value alignment across intelligent systems.

Today's Quick Wins

What happened: OpenAI announced GPT-5 will consolidate specialized models into a unified architecture, merging reasoning, multimodality, and long-context understanding into one foundation model with 40% better computational efficiency.

Why it matters: This architectural shift signals the industry's move from specialized AI tools toward unified systems that can handle multiple data types and reasoning tasks simultaneously, reducing infrastructure complexity for enterprises.

The takeaway: Organizations investing in multi-model AI workflows should prepare to consolidate their tech stacks as unified models become the new standard.

Deep Dive

The Great AI Consolidation: How Unified Models Are Reshaping Enterprise Architecture

The artificial intelligence landscape is experiencing a fundamental shift away from specialized models toward unified architectures that can handle multiple data types and reasoning patterns within a single framework. This development represents more than just technical efficiency—it's reshaping how organizations approach their entire AI infrastructure strategy.

The Problem: Enterprise AI implementations have become increasingly fragmented, with companies deploying separate models for text processing, image recognition, code generation, and logical reasoning. This approach creates integration challenges, increases computational costs, and requires specialized expertise for each model type.

The Solution: OpenAI's GPT-5 architecture demonstrates how unified models can consolidate these capabilities through advanced attention mechanisms and shared parameter spaces:

Multi-Modal Attention Framework: Shared attention layers process text, images, and structured data simultaneously, reducing the need for separate preprocessing pipelines by 60%

Unified Reasoning Engine: A single transformer architecture handles both analytical and creative tasks through dynamic parameter routing, eliminating the need for task-specific fine-tuning

Long-Context Integration: Extended context windows (up to 2 million tokens) enable comprehensive document analysis and multi-step reasoning without context switching overhead

The Results Speak for Themselves:

Baseline: Traditional multi-model deployments requiring 8-12 separate AI services

After Optimization: Single unified model handling equivalent workloads (85% reduction in model complexity)

Business Impact: $2.3 million average annual savings in infrastructure costs for Fortune 500 implementations

What We're Testing This Week

Optimizing Feature Selection for High-Dimensional Data

When working with datasets containing hundreds or thousands of features, selecting the right subset is critical for performance and interpretability.

Recursive Feature Elimination (RFE)

Common approach

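    from sklearn.ensemble import RandomForestClassifier
    from sklearn.feature_selection import RFE

    # Recursively drop the weakest features until 50 remain
    selector = RFE(estimator=RandomForestClassifier(), n_features_to_select=50)
    selector.fit(X_train, y_train)

Slow on large datasets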

Doesn’t account for feature interactions

Can overfit if not cross-validated
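The overfitting concern above can be addressed by letting cross-validation choose how many features to keep. The sketch below uses scikit-learn's RFECV for that. The synthetic dataset, the step size, the fold count, and the F1 scoring choice are all assumptions made for illustration, not settings from this newsletter.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFECV
from sklearn.model_selection import StratifiedKFold

# Synthetic stand-in for X_train / y_train (assumption: real data not available here)
X_train, y_train = make_classification(
    n_samples=300, n_features=30, n_informative=5, random_state=0
)

selector = RFECV(
    estimator=RandomForestClassifier(n_estimators=25, random_state=0),
    step=5,                          # drop 5 features per round to keep runtime low
    min_features_to_select=5,        # never go below 5 features
    cv=StratifiedKFold(n_splits=3),  # each subset size is scored on held-out folds
    scoring="f1",
)
selector.fit(X_train, y_train)

# Number of features with the best cross-validated score
print("features kept:", selector.n_features_)
X_selected = selector.transform(X_train)
```

A larger step trades selection granularity for speed, which matters most on the wide datasets where plain RFE is slowest.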

Better approach

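    from sklearn.ensemble import RandomForestClassifier
    from sklearn.feature_selection import SelectFromModel

    model = RandomForestClassifier(n_estimators=100)
    model.fit(X_train, y_train)

    # prefit=True tells the selector to reuse the already fitted model;
    # features with importance at or above the mean are kept
    selector = SelectFromModel(model, threshold="mean", prefit=True)
    X_selected = selector.transform(X_train)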

Faster and scalable

Uses model-based importance

Thresholding avoids arbitrary selection
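One way to use model-based selection without leaking information from held-out data is to place the selector inside a Pipeline, so it is refit on each training fold. This is a minimal sketch under assumptions: the synthetic data, the logistic regression downstream classifier, and the step names `select` and `clf` are illustrative choices.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline

# Synthetic data standing in for a real high-dimensional dataset (assumption)
X, y = make_classification(
    n_samples=300, n_features=30, n_informative=5, random_state=0
)

pipe = Pipeline([
    # The selector fits its own forest on each training fold
    ("select", SelectFromModel(
        RandomForestClassifier(n_estimators=50, random_state=0),
        threshold="mean",
    )),
    # Hypothetical downstream model trained only on the selected features
    ("clf", LogisticRegression(max_iter=1000)),
])

scores = cross_val_score(pipe, X, y, cv=3)
print("mean CV accuracy:", scores.mean())

# Refit on all data to inspect how many features pass the threshold
pipe.fit(X, y)
n_kept = int(pipe.named_steps["select"].get_support().sum())
print("features kept:", n_kept)
```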

BorutaPy

Uses shadow features (shuffled copies of the real features) to identify truly important variables. Often holds up better than RFE on noisy datasets.

SHAP-based Selection

Leverages SHAP values to rank features by contribution. Ideal for tree-based models and interpretability.
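The shadow-feature idea behind BorutaPy can be illustrated with plain scikit-learn and NumPy. The sketch below is a simplified single pass, not the BorutaPy algorithm itself, which repeats the comparison over many iterations and applies a statistical test. The synthetic data and the "beat the best shadow" rule are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)

# Synthetic data: with shuffle=False the first 4 columns are the informative ones
X, y = make_classification(
    n_samples=400, n_features=20, n_informative=4, n_redundant=0,
    shuffle=False, random_state=0,
)

# Shadow features: each column shuffled independently, which destroys
# any relationship with the target while keeping the marginal distribution
X_shadow = np.column_stack([rng.permutation(col) for col in X.T])
X_aug = np.hstack([X, X_shadow])

model = RandomForestClassifier(n_estimators=200, random_state=0)
model.fit(X_aug, y)

n_real = X.shape[1]
real_importance = model.feature_importances_[:n_real]
shadow_max = model.feature_importances_[n_real:].max()

# Keep only real features that are more important than the best shadow feature
keep = np.flatnonzero(real_importance > shadow_max)
print("selected feature indices:", keep)
```

A single pass like this is sensitive to the random shuffle, which is why the full method repeats it before accepting or rejecting a feature.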

Recommended Tools

This Week's Game-Changers

Evidently AI

Open-source tool for monitoring ML model performance. Supports drift detection and dashboards. Explore Evidently

Deepnote

Collaborative notebook platform for teams. Real-time editing, SQL blocks, and integrations. Try Deepnote

Metabase

Self-hosted BI tool for querying and visualizing data. Connects to most databases. Get Metabase

Weekly Challenge

Optimize a Classification Pipeline with Imbalanced Data

You’re working with a fraud detection dataset where only 2% of transactions are fraudulent.

Current implementation

    from sklearn.ensemble import RandomForestClassifier
    from sklearn.model_selection import train_test_split
    from sklearn.metrics import classification_report

    # Baseline: plain split and default forest, no handling of class imbalance
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)
    model = RandomForestClassifier()
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)
    print(classification_report(y_test, y_pred))

Goal: Achieve an F1-score above 0.85 on the minority class
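One possible starting point for the challenge combines three common adjustments: stratified splits, class weighting, and a decision threshold tuned on a validation set. This is a hedged sketch and not a reference solution. The synthetic dataset (about 2% positives), the split sizes, and the hyperparameters are assumptions, and nothing here guarantees the 0.85 target on real fraud data.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score, precision_recall_curve
from sklearn.model_selection import train_test_split

# Synthetic imbalanced data standing in for the fraud dataset (assumption)
X, y = make_classification(
    n_samples=5000, n_features=20, n_informative=6,
    weights=[0.98, 0.02], random_state=0,
)

# Stratified splits keep the rare class present in train, validation, and test
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.4, stratify=y, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.5, stratify=y_tmp, random_state=0
)

# class_weight="balanced" upweights the minority class during training
model = RandomForestClassifier(
    n_estimators=200, class_weight="balanced", random_state=0
)
model.fit(X_train, y_train)

# Pick the probability threshold that maximizes minority-class F1 on validation data
val_scores = model.predict_proba(X_val)[:, 1]
precision, recall, thresholds = precision_recall_curve(y_val, val_scores)
f1 = 2 * precision * recall / (precision + recall + 1e-12)
best_threshold = thresholds[np.argmax(f1[:-1])]  # last P/R point has no threshold

# Apply the tuned threshold to the untouched test set
test_pred = (model.predict_proba(X_test)[:, 1] >= best_threshold).astype(int)
print("threshold:", best_threshold)
print("minority-class F1 on test:", f1_score(y_test, test_pred))
```

Tuning the threshold on a separate validation split, instead of on the test set, keeps the reported F1 an honest estimate.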

Lightning Round

3 Things to Know Before Signing Off

Helios: The AI Operating System for Public Policy

Helios aims to revolutionize decision-making for public policy professionals by integrating AI-driven automation, legislative monitoring, and collaborative writing tools, promising greater efficiency and insight for government and compliance teams.

AWS Launches AI Agent Marketplace with Anthropic

Amazon Web Services (AWS) is set to launch a marketplace for AI agents, enabling startups to offer AI solutions and enterprise customers to access them. Anthropic’s participation could reshape industry adoption.

Former Intel CEO Unveils AI Alignment Benchmark

Former Intel CEO Pat Gelsinger introduces the Flourishing AI benchmark, a new way to test whether AI models align with values like social connection, purpose, virtue, health, and spirituality.