China’s Billion-Dollar GPU Scramble

Edition #265 | 13 March 2026

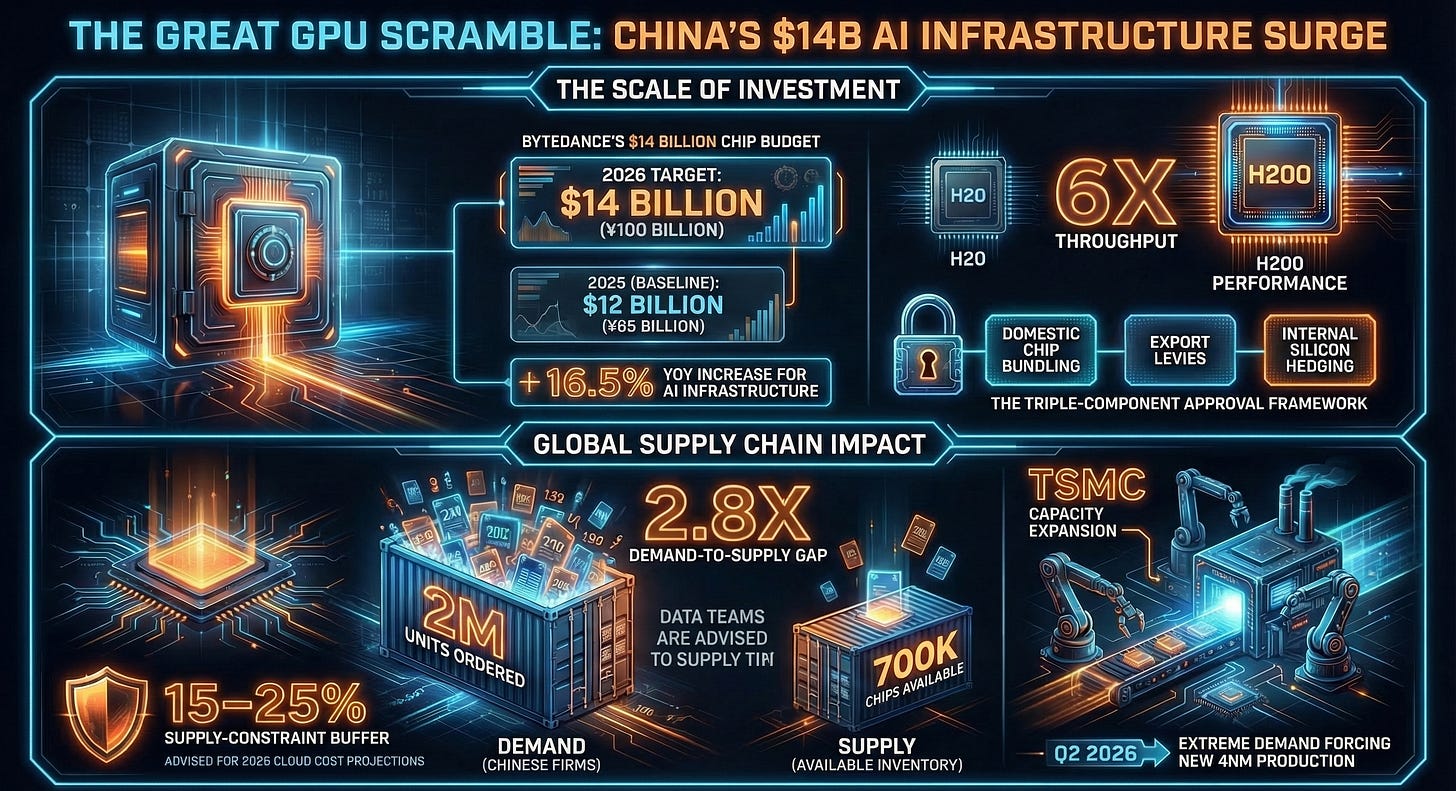

ByteDance Secures Access to 400,000+ Nvidia H200 GPUs in China’s Largest AI Chip Approval — $14B Commitment Follows

In this edition, we will also be covering:

SoftBank Seeks Record Loan of Up to $40 Billion for OpenAI Stake

Anthropic Unveils Amazon-Inspired Marketplace for AI Software

OpenAI Releases AI Agent Security Tool for Research Preview

Today’s Quick Wins

What happened: China’s government has officially approved ByteDance, Alibaba, and Tencent to collectively purchase more than 400,000 Nvidia H200 GPUs — the highest-tier chip currently permitted under U.S. export rules. ByteDance alone has budgeted $14 billion (¥100 billion) for Nvidia chip procurement in 2026, up from roughly $12 billion in 2025, as demand across its TikTok, Douyin, Volcano Engine cloud platform, and Doubao LLM continues to surge well beyond available GPU supply.

Why it matters: Chinese tech firms have already placed orders for more than 2 million H200 units for 2026 — nearly three times Nvidia’s current inventory of ~700,000 chips. This supply-demand gap is forcing Nvidia to approach TSMC for additional 4nm production capacity (new orders opening Q2 2026), fundamentally reshaping the global AI chip supply chain at a scale that will affect data center pricing, training timelines, and inference costs for every team building on cloud infrastructure.

The takeaway: If you are running cost projections for large-scale model training or cloud GPU reservations through 2026, build in a 15–25% supply-constraint buffer — this approval signals that demand for top-tier compute just got structurally more competitive worldwide.

Deep Dive

The Great GPU Scramble: What ByteDance’s $14B Nvidia Deal Means for Every Data Team

We are entering a period where access to high-end compute is no longer primarily a money problem — it is a geopolitics problem. Understanding that shift will change how smart data teams plan infrastructure.

For context, the H200 GPU is not just an incremental upgrade. It delivers roughly six times the throughput of Nvidia’s H20, the China-tailored chip that ByteDance and peers had been relying on before this approval. When Beijing officially greenlit purchases for ByteDance, Alibaba, and Tencent this week — reportedly during Nvidia CEO Jensen Huang’s in-person visit to China — it marked the culmination of a months-long negotiation involving U.S. export rules, Beijing’s domestic semiconductor quotas, and each company’s AI capacity roadmap.

The Problem: ByteDance operates Doubao, currently the most popular AI chatbot in China, alongside TikTok and Douyin’s recommendation engines — both of which are, at their core, massive real-time inference systems. Training updates at that scale require H200-class GPUs. The H20 simply wasn’t enough. ByteDance had been renting overseas data center capacity (classifying those costs as operating expenses rather than capex) to legally access Nvidia’s more powerful chips. That workaround is expensive and introduces latency. The domestic H200 approval changes the calculus entirely.

The Solution: The approval structure Beijing put in place has three interlocking components worth understanding as a data professional, because versions of this framework are appearing in enterprise AI procurement globally.

Conditional import licenses: ByteDance, Alibaba, and Tencent each received purchase approvals tied to conditions still being finalized by China’s National Development and Reform Commission. One reported condition involves bundling H200 imports with purchases of domestically-produced chips — a requirement that functions like a tax on foreign compute. This is why one source noted that some firms have not yet converted their approvals into actual purchase orders.

A 25% export levy: On the U.S. side, the Trump administration’s deal with Nvidia requires the company to remit 25% of Chinese H200 revenue to the government. At $27,000 per chip (the reported unit price), that adds roughly $6,750 per GPU to Nvidia’s effective cost structure — a number that will eventually surface in cloud pricing.

ByteDance’s internal hedge: Even while placing massive external chip orders, ByteDance’s internal semiconductor division (approximately 1,000 engineers, now incorporated under the Singapore subsidiary Picoheart) has completed a tape-out of a custom processor that matches H20-level performance at lower cost. The strategy is classic platform thinking: buy external compute for training-heavy workloads where H200 performance is non-negotiable, deploy cheaper custom silicon for inference at scale.

The Results Speak for Themselves:

Baseline (2025): ByteDance spent approximately ¥85 billion (~$12B) on Nvidia chips under restricted H20-only access

After Approval (2026 target): ¥100 billion (~$14B) in planned Nvidia procurement — a 16.5% year-over-year increase, contingent on H200 supply and condition finalization

Business Impact: Chinese firms collectively have orders in for 2M+ H200 chips against Nvidia’s ~700K available inventory, compelling Nvidia to commission additional TSMC 4nm production capacity — a supply chain expansion whose costs will ripple into global cloud GPU pricing by Q3 2026

The signal here for data teams is not just “China gets chips now.” It is that the world’s largest AI infrastructure buyers — U.S. hyperscalers, Chinese tech giants, and Gulf sovereign wealth funds — are all competing for the same finite pool of cutting-edge silicon. That competition is structural, not temporary.

What We’re Testing This Week

GPU-Efficient Fine-Tuning: QLoRA vs. Full Fine-Tuning in a Compute-Constrained World

Given this week’s news, it is worth revisiting just how much you can get done without H200-class access. The answer, with the right techniques, is more than most teams realize.

The dominant approach in 2026 for teams without hyperscaler budgets is QLoRA (Quantized Low-Rank Adaptation), which combines 4-bit quantization of the base model weights with low-rank adapter training. Compared to full fine-tuning of a 7B parameter model, QLoRA reduces GPU memory requirements by roughly 75% — meaning a task that would require 4× A100 80GB GPUs can often run on a single consumer-grade 24GB card. On benchmark tasks like instruction following (measured on MT-Bench), QLoRA-tuned models typically land within 1–3% of full fine-tune quality, which is negligible for most production use cases.

The practical workflow looks like this: you load the base model in 4-bit NF4 precision using bitsandbytes, attach lightweight LoRA adapters (rank 16–64 is the sweet spot for most tasks), train only those adapter weights while the frozen base model remains compressed in memory, and then merge the adapters back at inference time. The entire process — for a 7B model on a single A100 — typically completes in 2–4 hours for a 10,000-example dataset.

The second technique gaining traction in benchmark-conscious teams is Flash Attention 2 + gradient checkpointing as a drop-in addition to any training loop. Flash Attention 2 restructures the attention computation to be IO-bound rather than memory-bound, reducing peak VRAM usage by 30–40% with zero accuracy cost. Paired with gradient checkpointing (which trades compute for memory by recomputing activations during the backward pass rather than storing them), you can push sequence lengths 2–3× longer on the same hardware. For teams working with long-context financial documents, legal text, or time-series event logs, this combination is often the difference between needing a new GPU and shipping with what you have.

💵 50% Off All Live Bootcamps and Courses

📬 Daily Business Briefings; All edition themes are different from the other.

📘 1 Free E-book Every Week

🎓 FREE Access to All Webinars & Masterclasses

📊 Exclusive Premium Content

Recommended Tools

This Week’s Game-Changers

Unsloth Fine-tune Llama 3, Mistral, and Phi-3 models at up to 2× the speed of standard HuggingFace training with 70% less VRAM usage, thanks to hand-written GPU kernels that replace standard attention and MLP operations. Supports QLoRA out of the box. Check it out

LM Eval Harness (EleutherAI) The industry-standard open-source evaluation framework used to benchmark every major open model — supports 60+ tasks including ARC, HellaSwag, MMLU, and GSM8K. Run it locally against any HuggingFace-compatible checkpoint to validate your fine-tuned model before deployment. Check it out

Weights & Biases (W&B) Weave W&B’s new LLM observability layer sits on top of your existing experiment tracking to log prompts, completions, token usage, and latency across every model call in your pipeline — no code restructuring required. Integrates directly with LangChain, LlamaIndex, and raw OpenAI/Anthropic SDK calls. Check it out

Quick Poll

Lightning Round

3 Things to Know Before Signing Off

SoftBank Seeks Record Loan of Up to $40 Billion for OpenAI Stake

SoftBank is pursuing a massive $40 billion loan to fund its expanded stake in OpenAI, potentially marking the largest corporate loan ever. This move underscores aggressive investment in AI amid competitive pressures.

Anthropic Unveils Amazon-Inspired Marketplace for AI Software

Anthropic launched an AI software marketplace modeled after Amazon, enabling developers to sell and distribute AI tools seamlessly. It aims to foster innovation and simplify AI adoption for businesses.

OpenAI Releases AI Agent Security Tool for Research Preview

OpenAI introduced a security tool for AI agents in research preview, enhancing protection against vulnerabilities. It targets developers building autonomous agents, prioritizing safety in advanced AI applications.

Follow Us:

LinkedIn | X (formerly Twitter) | Facebook | Instagram

Please like this edition and put up your thoughts in the comments.