Version Control for Data Projects

Edition #252 | 10 February 2026

Hello!

Welcome to today’s edition of Business Analytics Review!

Today we dive deep into Version Control for Data Projects, staying in the realm of theory and principles, with a focused lens on Git implementation and workflow design. The focus is on concepts rather than folder structures or command walkthroughs: the architecture that makes reproducible, collaborative, and auditable machine learning possible in the first place.

Why Git Still Rules (Even When Data Is Involved) – A Purely Theoretical Exploration

At its heart, version control is about preserving history, enabling safe experimentation, and creating shared truth in environments where change is constant. Git was born for software code: text files that are small, deterministic, and human-readable. Data projects, however, introduce entirely different realities: datasets that can be enormous and mutable, models whose performance depends on exact combinations of data, hyperparameters, and random seeds, and experiments that often branch in dozens of exploratory directions before any single path proves valuable.

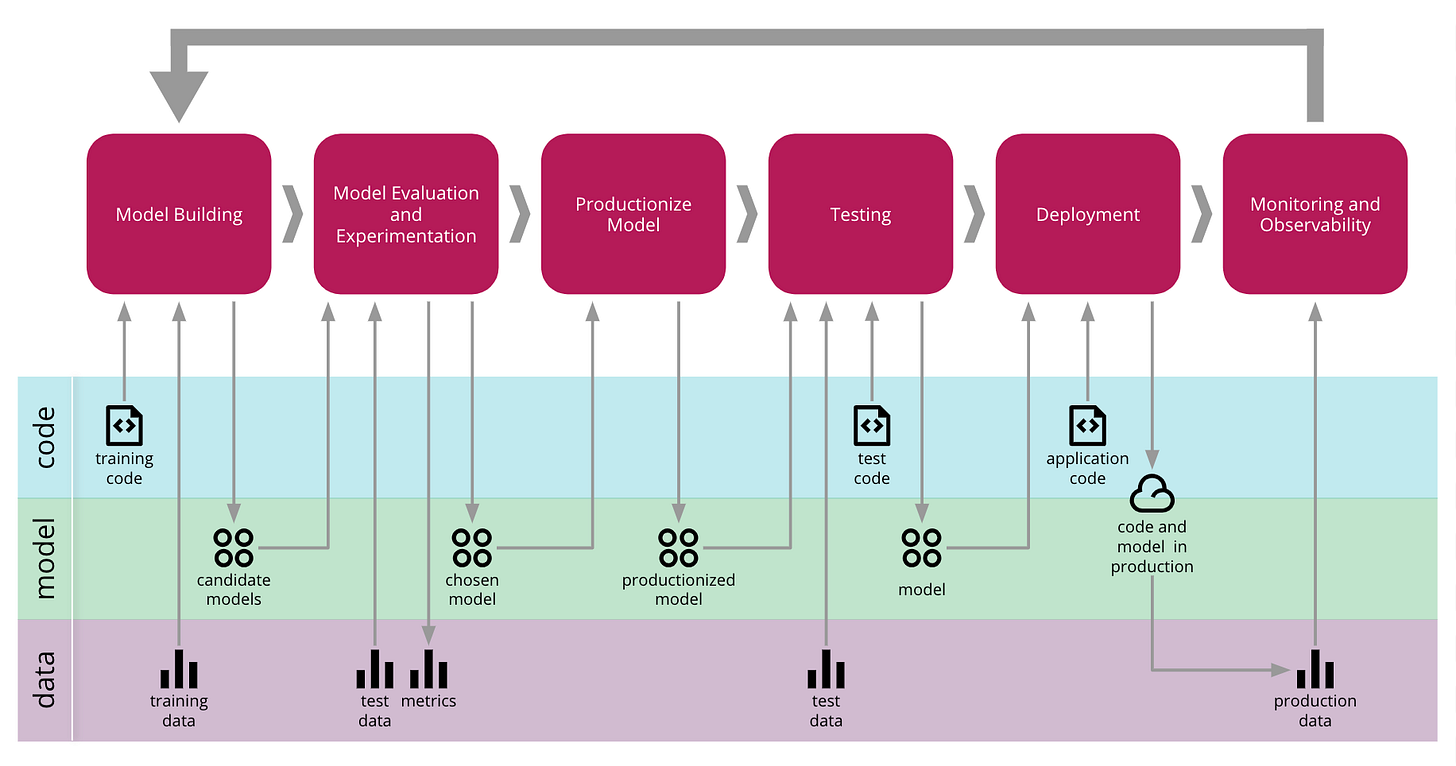

The fundamental challenge is this: in traditional software, the artifact (code) is the source of truth and is relatively small. In machine learning, the source of truth is a triad (code, data, and the resulting model) in which data and models dwarf the code in size and complexity, and the relationship between them is non-linear and highly sensitive. Pure Git struggles here because it was never meant to track petabyte-scale immutable snapshots or to handle the fact that “changing one line of preprocessing can invalidate an entire lineage of models.”

Yet Git endures as the conceptual cornerstone because its core principles map elegantly to the needs of data projects when applied at the right level of abstraction:

Immutability and provenance: Every change creates a new, identifiable state that can never be silently overwritten. This is the bedrock of reproducibility: being able to reconstruct the exact conditions that produced a given result.

Branching as intellectual isolation: Branches allow parallel exploration of ideas without contaminating the shared foundation. In data science, this mirrors the scientific method: hypothesize, test in isolation, then integrate only what proves valuable.

Merging as deliberate integration: Merges force explicit resolution of conflicts, turning potential chaos into documented decisions. In ML, this becomes a moment of reflection: which data transformation, which feature set, which training regime actually improves the system?

Distributed history and auditability: Every participant carries the full story. For regulated industries (finance, healthcare, autonomous systems), this distributed ledger of decisions becomes compliance gold: proof of exactly which data, which code, and which transformations led to a deployed model.

Atomicity and rollback: Changes happen in indivisible units. When a model suddenly degrades in production, the ability to theoretically “travel back in time” to a known-good state is priceless.

The theoretical elegance lies in treating Git as the metadata and coordination layer. Code, configuration, pipeline definitions, and references (pointers) live under Git’s protection. The heavy artifacts (raw data, processed datasets, trained weights) live elsewhere but are referenced immutably, so the entire system can be reconstructed from the Git history together with the artifact store those references point to.

This separation of concerns creates a powerful abstraction: Git becomes the “brain” that remembers why and when things changed, while the data layer handles the “body” of actual storage. The result is a system that respects both the exploratory, creative nature of data science and the engineering discipline required for production.
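For readers who want one concrete picture of the pointer idea, here is a minimal, optional sketch in Python. It assumes a content-addressed store kept in a local folder, where each artifact is filed under its own SHA-256 digest and only a tiny pointer record would be committed to Git. The function names (`store_artifact`, `load_artifact`, `demo`) and the pointer fields are hypothetical, chosen for illustration; real tools use their own formats.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def store_artifact(payload: bytes, store_dir: Path) -> dict:
    """Write payload into a content-addressed store and return a small pointer."""
    digest = hashlib.sha256(payload).hexdigest()
    target = store_dir / digest
    if not target.exists():  # identical content is stored only once
        target.write_bytes(payload)
    # Hypothetical pointer format: this small record is what Git would track.
    return {"sha256": digest, "size_bytes": len(payload)}


def load_artifact(pointer: dict, store_dir: Path) -> bytes:
    """Resolve a pointer back to its bytes and verify they are unchanged."""
    payload = (store_dir / pointer["sha256"]).read_bytes()
    if hashlib.sha256(payload).hexdigest() != pointer["sha256"]:
        raise ValueError("artifact does not match its pointer")
    return payload


def demo() -> tuple:
    """Store two dataset versions, then restore the first from its pointer."""
    with tempfile.TemporaryDirectory() as tmp:
        store = Path(tmp)
        v1 = store_artifact(b"id,label\n1,cat\n", store)
        v2 = store_artifact(b"id,label\n1,dog\n", store)
        pointer_text = json.dumps(v1, sort_keys=True)  # the text a commit would hold
        restored = load_artifact(json.loads(pointer_text), store)
        return v1, v2, restored
```

Because the pointer is derived from the content, editing a dataset cannot silently overwrite an earlier version: the edit produces a new digest, and the old pointer keeps resolving to the old bytes for as long as the store retains them.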

Common theoretical pitfalls teams encounter (and why they matter):

Treating data as mutable in the same way code is mutable → leads to silent drift and irreproducible results.

Linear workflows that suppress branching → stifle creativity and make it impossible to compare alternatives fairly.

Over-reliance on a single “main” branch without clear promotion rules → turns the canonical truth into a moving target.

Neglecting lineage and provenance → makes debugging model degradation an archaeological dig instead of a precise investigation.
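The lineage pitfall can be made a little more tangible with a small, optional Python sketch. It assumes a run is fully described by four things named earlier in this edition: the code version, the data, the hyperparameters, and the random seed. The function `lineage_id` and its field names are hypothetical, and the 16-character truncation is an arbitrary choice for readability.

```python
import hashlib
import json


def lineage_id(code_commit: str, data_sha256: str, params: dict, seed: int) -> str:
    """Derive a short, deterministic identifier from everything that shaped a run."""
    record = {
        "code_commit": code_commit,   # e.g. the Git commit the training code came from
        "data_sha256": data_sha256,   # digest of the exact dataset snapshot
        "params": params,             # hyperparameters
        "seed": seed,                 # random seed
    }
    # Sorted keys and fixed separators make the serialization independent
    # of the order in which the fields or parameters were supplied.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]
```

Two runs with the same identifier were produced under the same recorded conditions; any change to code, data, parameters, or seed yields a different identifier, which is what turns "what exactly produced this model?" into a lookup rather than a dig.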

When these principles are internalized, version control stops being a tooling tax and becomes a force multiplier: experiments become safer, collaboration becomes frictionless, and production incidents become traceable rather than mysterious.

The payoff is profound: teams move faster because they fear change less. They sleep better because they can always answer “what exactly produced this model?” And organizations gain trust, both internally and with regulators, because the entire decision history is preserved.

Trending in AI and Data Science

Let’s catch up on some of the latest happenings in the world of AI and Data Science.

Roblox launches AI tech that generates functioning models with natural language

Roblox has unveiled an AI‑powered “4D creation” tool that lets users generate fully functional, interactive in‑game models, such as drivable vehicles, using natural‑language prompts. It builds on earlier tech that produced only static 3D objects and aims to draw more developers to the platform.

Dubai‑based firm to invest $1.6 billion in Africa AI and farmland

Dubai‑based Maser Group, an electronics maker, plans a $1.6 billion investment in farmland and data centers across Nigeria, Ghana, and Kenya over the next 24 months, targeting Africa’s rising demand for digital infrastructure and food security. It has already spent $300 million on land and related projects.

Tesla launches China AI training centre as self‑driving race accelerates

Tesla has opened an AI training centre in China to accelerate development of its Full Self‑Driving software using neural networks trained on real‑world driving video, amid intensified competition with Chinese automakers racing to roll out affordable Level 3 autonomous systems and despite regulatory barriers on cross‑border data flows.

Recommended Reads

Branching Out: 4 Git Workflows for Collaborating on ML

A clear, comparative analysis of four distinct branching philosophies and how each aligns (or misaligns) with the experimental, iterative reality of machine learning teams. Check it out

Principled Git-based Workflow in Collaborative Data Science Projects

Thoughtful examination of how classic GitFlow concepts can be adapted at a philosophical level to support true collaboration in data science without sacrificing either creativity or stability. Check it out

How to Effectively Version Control Your Machine Learning Pipeline

Elegant framework that defines the four theoretical pillars every ML versioning strategy must address, with emphasis on why each pillar is non-negotiable for long-term success. Check it out

Trending AI Tool: lakeFS – “Git for your data lake”

At its core, lakeFS brings the theoretical power of Git (branching, committing, merging, atomic rollbacks, and hooks) directly to object storage without duplicating data. Branches are zero-copy, merges are metadata operations rather than bulk copies, and every change carries full provenance. It turns mutable data lakes into versioned, auditable assets that behave like code repositories, enabling safe experimentation at petabyte scale while preserving the immutability and traceability that modern ML demands. Organizations like Netflix and Arm rely on it precisely because it makes data as reliable and governable as software.

Learn more.

Please like this edition and share your thoughts in the comments.