Regularization in Logistic Regression: Lasso vs. Ridge

Edition #267 | 18 March 2026

Hello!

Welcome to today’s edition of Business Analytics Review!

Today we dive into a topic that’s essential for building reliable models that actually perform in the real world: regularization in logistic regression, and specifically Lasso vs. Ridge.

Overfitting is one of those sneaky problems we all run into sooner or later. Picture this: you’re working on a customer churn prediction model for a telecom company. You’ve got dozens of features (call duration, plan type, complaints history, even device age), and your logistic regression model nails the training data with near-perfect accuracy. But when you test it on fresh customer data? The performance drops off a cliff. That’s overfitting in action: the model has memorized noise and quirks in the training set instead of learning the true underlying patterns.

Regularization is our go-to solution. It works by adding a penalty term to the loss function (the log-loss, in logistic regression), which discourages overly complex models with large coefficients. The two most popular techniques are Ridge (L2 regularization) and Lasso (L1 regularization). Let’s break them down.
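For reference, one common way to write the penalized objective is shown below. Treat it as a sketch of the textbook form rather than any one library's exact convention: texts differ on whether the log-loss is averaged or summed and whether the penalty carries a factor of ½, and the intercept β₀ is usually left out of the penalty. Here λ ≥ 0 sets the penalty strength, so λ = 0 recovers plain logistic regression, and the sums in the penalty run over the k feature coefficients.

```latex
J(\beta_0, \boldsymbol{\beta}) =
  -\frac{1}{n}\sum_{i=1}^{n}\Big[\, y_i \log p_i + (1 - y_i)\log(1 - p_i) \Big]
  + \lambda\, R(\boldsymbol{\beta}),
\qquad
p_i = \frac{1}{1 + e^{-(\beta_0 + \mathbf{x}_i^{\top}\boldsymbol{\beta})}}

R_{\text{Ridge}}(\boldsymbol{\beta}) = \sum_{j=1}^{k} \beta_j^2,
\qquad
R_{\text{Lasso}}(\boldsymbol{\beta}) = \sum_{j=1}^{k} \lvert \beta_j \rvert
```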

L1 And L2 Regularization Explained & Practical How To Examples

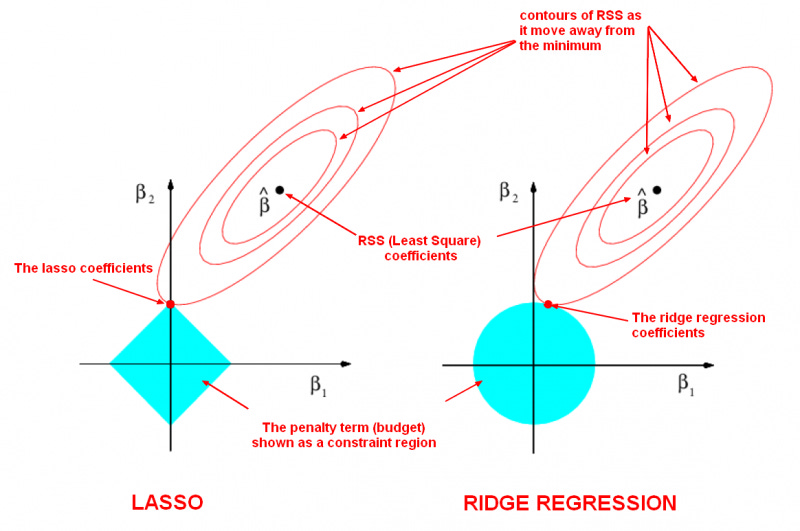

Ridge Regression (L2 penalty) adds the sum of the squared coefficients (multiplied by a tuning parameter λ) to the loss. This shrinks all coefficients toward zero but essentially never sets any of them exactly to zero. It’s especially helpful when features are highly correlated (multicollinearity), which is common in business datasets with variables like sales channels, marketing spend categories, or economic indicators. Ridge keeps all features but smooths out their influence, spreading weight across correlated predictors and leading to a more stable model.
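To see that shrinkage in action, here is a minimal sketch using scikit-learn. The dataset from `make_classification` is an assumption standing in for correlated business features, not real data. It fits an L2-penalized model at three regularization strengths: as C falls (λ rises), the coefficient vector should shrink, while no coefficient lands exactly on zero.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a business dataset (an assumption, not real data):
# 4 informative features, 4 redundant ones built as linear combinations of
# them (strong multicollinearity), and 2 pure-noise features.
X, y = make_classification(
    n_samples=500, n_features=10, n_informative=4, n_redundant=4,
    random_state=0,
)
X = StandardScaler().fit_transform(X)  # the L2 penalty is scale-sensitive


def ridge_coefs(C):
    """Fit an L2-penalized logistic regression and return its coefficients.

    scikit-learn's C is an inverse regularization strength: smaller C means
    a larger lambda and therefore more shrinkage.
    """
    model = LogisticRegression(penalty="l2", C=C, solver="lbfgs", max_iter=1000)
    model.fit(X, y)
    return model.coef_.ravel()


Cs = [1.0, 0.01, 0.0001]  # weak -> strong regularization
results = {C: ridge_coefs(C) for C in Cs}
for C, coefs in results.items():
    print(f"C={C:g}: coefficient norm={np.linalg.norm(coefs):.3f}, "
          f"exact zeros={np.sum(coefs == 0.0)}")
```

Standardizing first matters because the penalty treats every coefficient alike; a feature measured on a larger scale needs a smaller coefficient for the same effect and would otherwise be penalized less.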

Lasso Regression (L1 penalty), on the other hand, adds the sum of the absolute values of the coefficients (again scaled by λ). This can drive some coefficients all the way to zero, effectively performing automatic feature selection. Imagine you’re analyzing user behavior data for a SaaS product: Lasso might zero out irrelevant or redundant signals (say, minor page views that add no predictive power) and give you a cleaner, more interpretable model. It’s a lifesaver in high-dimensional datasets where you want sparsity.
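A quick way to watch Lasso’s feature selection is to fit L1 and L2 models side by side on data where most features are pure noise. The sketch below assumes synthetic data in which only 3 of 20 features are informative, and it uses scikit-learn’s `liblinear` solver because that solver accepts both penalties. Typically the L1 model keeps a handful of features and sets the rest exactly to zero, while the L2 model keeps all 20.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Hypothetical "user behavior" data: 20 features, of which only the first 3
# (shuffle=False keeps them in front) carry signal; the other 17 are noise.
X, y = make_classification(
    n_samples=400, n_features=20, n_informative=3, n_redundant=0,
    n_clusters_per_class=1, shuffle=False, random_state=42,
)
X = StandardScaler().fit_transform(X)


def fit_coefs(penalty, C=0.05):
    """Fit a penalized logistic regression; 'liblinear' accepts 'l1' and 'l2'."""
    model = LogisticRegression(penalty=penalty, C=C, solver="liblinear",
                               random_state=0)
    model.fit(X, y)
    return model.coef_.ravel()


lasso = fit_coefs("l1")
ridge = fit_coefs("l2")
print("L1 kept features:", np.flatnonzero(lasso))
print("L2 non-zero coefficients:", np.count_nonzero(ridge), "of", ridge.size)
```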

Shrinkage Methods: Ridge Vs. Lasso Regression – Data Science and Engineering Blog

In practice, the choice often comes down to your goals:

Use Ridge when you believe most features contribute something and you just want to reduce their impact.

Go for Lasso when feature selection matters: think fraud detection, recommendation engines, or any scenario where simpler models are easier to explain to stakeholders.

Many practitioners now reach for Elastic Net (a hybrid of both) when they want the best of both worlds.
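In scikit-learn, Elastic Net is available through `penalty="elasticnet"` together with an `l1_ratio` that blends the two penalties (0 is pure Ridge, 1 is pure Lasso), and it requires the `saga` solver. The sketch below, again on assumed synthetic data, sweeps `l1_ratio` at a fixed C so you can watch sparsity appear as the mix tilts toward L1. Depending on your scikit-learn version, you may also see convergence or deprecation warnings.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Illustrative data (an assumption): 3 informative, 5 redundant, 12 noise features.
X, y = make_classification(
    n_samples=400, n_features=20, n_informative=3, n_redundant=5,
    n_clusters_per_class=1, random_state=7,
)

nonzero_by_ratio = {}
for l1_ratio in (0.0, 0.5, 1.0):  # 0.0 = pure Ridge, 1.0 = pure Lasso
    # The elastic-net penalty needs the 'saga' solver; scaling helps it converge.
    model = make_pipeline(
        StandardScaler(),
        LogisticRegression(penalty="elasticnet", l1_ratio=l1_ratio, C=0.1,
                           solver="saga", max_iter=5000, random_state=0),
    )
    model.fit(X, y)
    coefs = model[-1].coef_.ravel()  # coefficients of the final pipeline step
    nonzero_by_ratio[l1_ratio] = np.count_nonzero(coefs)
    print(f"l1_ratio={l1_ratio}: {nonzero_by_ratio[l1_ratio]} of {coefs.size} "
          "coefficients non-zero")
```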

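The beauty is that libraries like scikit-learn make it dead simple to experiment: just switch the penalty parameter in LogisticRegression to ‘l1’ or ‘l2’ and tune the regularization strength with cross-validation. Two details trip people up. First, scikit-learn exposes the strength as C, the inverse of λ (C = 1/λ), so a smaller C means stronger regularization. Second, the default ‘lbfgs’ solver supports only the L2 penalty, so L1 needs a solver such as ‘liblinear’ or ‘saga’.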
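Putting that tuning advice into practice, one minimal approach (assuming a binary target and synthetic data) is a `GridSearchCV` over both the penalty type and C. The scaler sits inside the pipeline so each cross-validation fold is standardized using only its own training data, which avoids leaking information from the validation fold.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic binary data (an assumption for illustration).
X, y = make_classification(n_samples=600, n_features=25, n_informative=5,
                           n_redundant=5, random_state=1)

pipe = Pipeline([
    ("scale", StandardScaler()),
    # 'liblinear' handles both 'l1' and 'l2' for binary problems.
    ("clf", LogisticRegression(solver="liblinear", random_state=0)),
])

# C is an inverse regularization strength, so this grid spans strong
# (small C) to weak (large C) regularization.
param_grid = {
    "clf__penalty": ["l1", "l2"],
    "clf__C": np.logspace(-3, 2, 6),
}
search = GridSearchCV(pipe, param_grid, cv=5, scoring="roc_auc")
search.fit(X, y)

best = search.best_params_
print("best penalty:", best["clf__penalty"])
# Implied lambda = 1/C under scikit-learn's convention (penalty vs. summed loss).
print("best C:", best["clf__C"], "-> implied lambda:", 1 / best["clf__C"])
print("mean CV ROC AUC:", round(search.best_score_, 3))
```

Scoring by ROC AUC is a choice made for this sketch; swap in whichever metric matters for your business problem.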

Recommended Reads

Penalized Logistic Regression Essentials in R: Ridge, Lasso and Elastic Net

A practical, code-heavy guide showing exactly how to implement and compare these techniques in logistic regression using R. Check it out

Lasso and Ridge Regularization - A Rescuer From Overfitting

An accessible explanation of how these methods tame overfitting, with real-world intuition and examples from linear models (principles apply directly to logistic regression). Check it out

Ridge Regression vs Lasso Regression

A clear breakdown of the mathematical and practical differences between L2 and L1 penalties, including when each shines in preventing overfitting. Check it out

Trending in AI and Data Science

Let’s catch up on some of the latest happenings in the world of AI and Data Science:

Alibaba Debuts OpenClaw App to Feed China’s Agentic AI Addiction

Alibaba launched “JVS Claw,” a mobile app enabling iOS and Android users to quickly deploy OpenClaw AI agents for tasks like shopping and travel without coding. Free for 14 days, it intensifies competition with Baidu amid China’s agentic AI frenzy.

Anthropic’s Claude AI can respond with charts, diagrams ...

Anthropic’s Claude now generates interactive charts, diagrams, and visuals directly in chats, like periodic tables or building weight distributions, when helpful. Users can request them; they evolve with conversations, unlike static artifacts.

Anthropic, Google and OpenAI Bet Big on India

Anthropic, Google, OpenAI, and Microsoft are expanding in India with offices, investments, and infrastructure to tap its tech talent and AI market potential outside China. Anthropic plans a Bengaluru office to challenge leaders.

Tool of the Week: Desmos Graphing Calculator

Desmos Graphing Calculator is a free, web-based visualization tool that helps learners explore functions, equations, and data interactively. Its real-time graph plotting helps build intuition for concepts like regularization.

Learn more.

Follow Us:

LinkedIn | X (formerly Twitter) | Facebook | Instagram

Please like this edition and share your thoughts in the comments.
