Fixing Imbalanced Data Issues

Edition #181 | 27 August 2025

Apply for 30% Scholarship Here - Scholarship Form

Click Here to Explore More About the Program - Project Details

Hello!

Welcome to today's edition of Business Analytics Review!

Today we're talking about a topic that's tripped up many of us in the AI and ML world: fixing imbalanced data issues. You know, that sneaky problem where your dataset has way more examples of one class than another, throwing your models off balance. Whether you're building predictive systems for business decisions or optimizing operations, getting this right can make all the difference in delivering fair, accurate results. Let's break it down step by step, like we're grabbing coffee and geeking out over code.

Why Imbalanced Data Skews Model Accuracy

In machine learning, datasets often reflect real-world scenarios where events aren't evenly distributed: think of spam emails versus legitimate ones, or rare medical conditions amid common symptoms. When one class dominates (the majority class), models tend to prioritize it, achieving high overall accuracy by simply predicting the majority outcome most of the time. However, this skews results, leading to poor performance on the minority class, which is often the most critical. For instance, in fraud detection, if only 1% of transactions are fraudulent, a model can ignore them entirely and still report 99% accuracy, resulting in significant financial losses. Addressing this also supports fairness, as biased models can perpetuate inequalities, such as in hiring algorithms that overlook underrepresented groups. By rebalancing, we improve accuracy across all classes and build more ethical AI systems.

Strategies for Handling Imbalanced Datasets

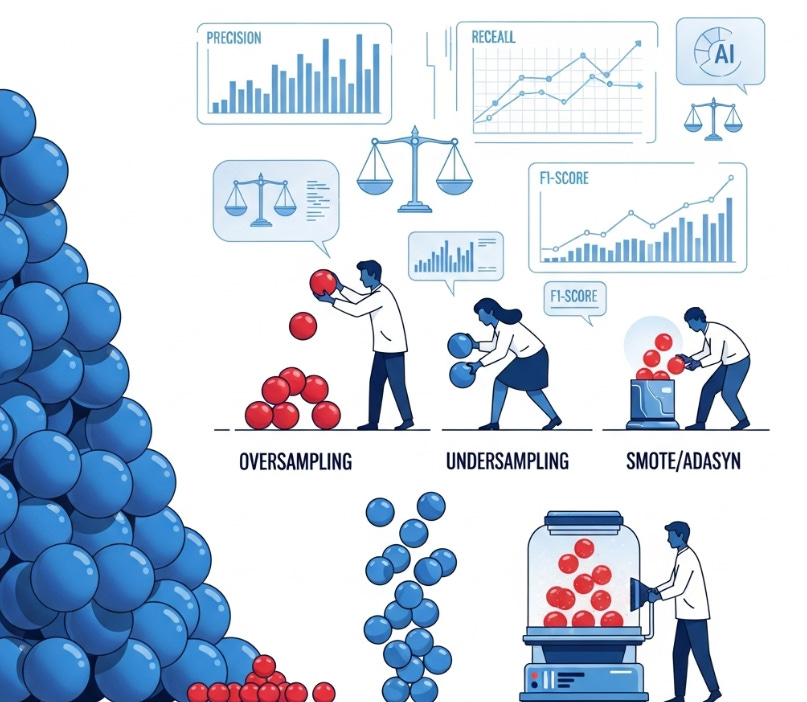

Several approaches can help, blending technical tweaks with practical insights. Resampling techniques adjust the dataset size: oversampling duplicates minority class examples (simple but risks overfitting), while undersampling removes majority class instances (efficient but may lose valuable data). Synthetic data generation, like SMOTE (Synthetic Minority Over-sampling Technique), creates new minority samples by interpolating between existing ones, adding diversity without exact copies. Other methods include adjusting class weights in algorithms to penalize misclassifications of the minority more heavily, or using ensembles such as Random Forests, which tend to cope better with imbalance when paired with class weights or balanced bootstrap samples. In industry, teams often combine these. For example, a bank might apply SMOTE to the training data only (never the test set) to boost fraud detection rates, keeping models robust and fair.
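The two basic resampling moves described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the helper names (`random_oversample`, `random_undersample`) and the toy 95-to-5 dataset are assumptions made for the example, and in practice you would resample only the training split.

```python
import random
from collections import Counter


def _group_by_class(rows, labels):
    """Collect rows into a dict keyed by class label."""
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(label, []).append(row)
    return groups


def random_oversample(rows, labels, seed=0):
    """Duplicate randomly chosen rows until every class matches the largest one."""
    rng = random.Random(seed)
    groups = _group_by_class(rows, labels)
    target = max(len(group) for group in groups.values())
    out_rows, out_labels = [], []
    for label, group in groups.items():
        extra = [rng.choice(group) for _ in range(target - len(group))]
        out_rows.extend(group + extra)
        out_labels.extend([label] * target)
    return out_rows, out_labels


def random_undersample(rows, labels, seed=0):
    """Keep a random subset of each class, sized to match the smallest one."""
    rng = random.Random(seed)
    groups = _group_by_class(rows, labels)
    target = min(len(group) for group in groups.values())
    out_rows, out_labels = [], []
    for label, group in groups.items():
        out_rows.extend(rng.sample(group, target))
        out_labels.extend([label] * target)
    return out_rows, out_labels


# Hypothetical toy data: 95 majority rows (label 0), 5 minority rows (label 1)
rows = [(i,) for i in range(100)]
labels = [0] * 95 + [1] * 5

over_rows, over_labels = random_oversample(rows, labels)
under_rows, under_labels = random_undersample(rows, labels)
print(Counter(over_labels))   # both classes at 95
print(Counter(under_labels))  # both classes at 5
```

Oversampling keeps every original row but repeats minority rows, which is where the overfitting risk comes from; undersampling throws away 90 majority rows here, which is the information loss mentioned above.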

Case Studies for Comprehension

To make this relatable, consider a credit card fraud detection system: In one study, applying SMOTE to a highly imbalanced dataset (with fraud at <1%) improved recall from 60% to over 90%, catching more fraudulent transactions without overwhelming alerts. In healthcare, models for rare disease prediction often use undersampling combined with class weighting; a review of medical datasets showed this reduced false negatives by 25%, aiding early diagnoses. These examples highlight how addressing imbalance not only boosts metrics but also delivers real business value, like cost savings or better patient outcomes.

In-Depth Exploration: Fixing Imbalanced Data Issues in AI and Machine Learning

Hello, fellow analytics enthusiasts! Welcome to today's edition of the Business Analytics Review, your daily dose of insights into the ever-evolving world of Artificial Intelligence and Machine Learning. I'm thrilled to dive into a topic that's close to the heart of many data practitioners: fixing imbalanced data issues. If you've ever built a model that nailed the easy predictions but flopped on the rare, crucial ones, you know exactly what I'm talking about. Today, we'll unpack why this happens, explore practical strategies like resampling and synthetic data generation, and discuss how these fixes promote fairness and accuracy. I'll weave in some real-world anecdotes and case studies to keep things grounded, plus recommendations for deeper reading and a trending AI tool to try out. Let's get started: grab your coffee, and let's make sense of those skewed datasets together.

Understanding the Problem: Why Imbalanced Data Skews Everything

Picture this: You're training a model to detect defective products on an assembly line. Out of 10,000 items, only 50 are faulty. That's a classic imbalanced dataset, where the majority class (good products) dwarfs the minority (defects). In such cases, a naive model might just predict "good" every time and boast 99.5% accuracy. Sounds great, right? But it's misleading. The model hasn't learned to spot defects; it's just riding the wave of the dominant class. This skew happens because most algorithms optimize for overall error reduction, naturally favoring the majority. The result? Poor recall on the minority class, meaning critical misses, like failing to flag a safety issue that could lead to product recalls and lawsuits.
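To see the arithmetic behind that assembly-line example, here is a small sketch that scores the "always predict good" model. The label encoding (1 for defective, 0 for good) is an assumption made for illustration.

```python
# 10,000 items: 50 defective (label 1), 9,950 good (label 0)
y_true = [1] * 50 + [0] * 9950

# Naive model: predict "good" for every item
y_pred = [0] * len(y_true)

# Accuracy: share of items where the prediction matches the truth
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Recall on defects: share of real defects the model actually flagged
caught = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
recall = caught / sum(y_true)

print(f"accuracy = {accuracy:.1%}")     # 99.5%
print(f"defect recall = {recall:.1%}")  # 0.0%
```

The accuracy number looks excellent while every single defect slips through, which is why accuracy alone is a poor yardstick on skewed data.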

Beyond accuracy, there's a fairness angle. Imbalanced data can amplify biases; for example, in loan approval systems, if historical data underrepresents certain demographics, the model might unfairly deny applications, perpetuating inequality. In industries like healthcare or finance, this isn't just a technical glitch; it's an ethical concern. Research suggests that unchecked imbalance leads to models that are unreliable in high-stakes scenarios, where the minority class often represents the "signal" we care about most, like rare diseases or fraudulent transactions. To address it, we shift focus to metrics like precision (how many flagged items are truly positive?), recall (how many actual positives did we catch?), and the F1-score (the harmonic mean of the two). These give a truer picture, ensuring our models aren't just accurate on paper but effective in practice.
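As a rough sketch of how those three metrics are computed from raw predictions, the snippet below counts true positives, false positives, and false negatives by hand. The function name `precision_recall_f1` and the toy predictions are hypothetical, chosen only to make the numbers easy to follow.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Return (precision, recall, F1) for the given positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    # Guard against division by zero when nothing is flagged or nothing is positive
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical toy case: 10 real positives among 100 samples.
# The model catches 6 of them, misses 4, and raises 2 false alarms.
y_true = [1] * 10 + [0] * 90
y_pred = [1] * 6 + [0] * 4 + [1] * 2 + [0] * 88

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Here 6 of the 8 flagged items are real positives (precision 0.75) and 6 of the 10 real positives are caught (recall 0.60); accuracy on the same predictions would be 94%, hiding both gaps.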

Core Strategies: Resampling and Beyond

Fixing class imbalance starts with resampling: oversampling duplicates minority cases (risking overfitting), while undersampling cuts majority samples (risking information loss). Smarter undersampling methods like Tomek links and NearMiss choose which majority samples to drop based on how close they sit to minority samples, which can sharpen the decision boundary. Synthetic data generation, such as SMOTE and ADASYN, creates new minority samples to boost variety, particularly useful in sensitive domains like healthcare where real minority examples are scarce. Algorithm-level solutions include class weighting, Balanced Random Forests, and boosting methods like XGBoost that let you weight the positive class. Often, combining resampling with algorithmic tweaks delivers the best results. Iteration is essential: validate with cross-validation, monitor fairness with robust metrics, and keep refining as the underlying data shifts.
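The interpolation idea behind SMOTE can be illustrated with a short standard-library sketch. This is a simplified, SMOTE-style toy and not the reference algorithm or the imbalanced-learn implementation: the function name `smote_like`, the choice of `k`, and the four sample points are all assumptions made for the example.

```python
import math
import random


def smote_like(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points, each on the line segment between a
    random minority point and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Rank the other minority points by Euclidean distance to the base point
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # how far to move from base toward the neighbour
        synthetic.append(
            tuple(b + gap * (n - b) for b, n in zip(base, neighbour))
        )
    return synthetic


# Hypothetical minority-class points with two features each
minority = [(1.0, 1.0), (1.2, 0.9), (0.8, 1.1), (1.1, 1.3)]
new_points = smote_like(minority, n_new=6)

for point in new_points:
    print(tuple(round(v, 3) for v in point))
```

Because each synthetic point lies between two real minority points, the new samples add variety without being exact copies. They also never leave the region the minority class already occupies, so this kind of generation cannot invent patterns the data does not contain.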

Ensuring Fairness and Accuracy: A Holistic View

Fixing imbalance isn't just about better scores; it's about building trustworthy AI. Fairness means models don't discriminate; for example, resampling can help equalize error rates across groups, but we must watch for over-correction that might favor minorities unfairly. Accuracy improves as we capture nuanced patterns, leading to real ROI, like reduced false positives in security systems, saving time and resources. Industry insights show that companies investing in these strategies see 20-30% better predictive power in imbalanced scenarios. An anecdote from a fintech friend: Their team ignored imbalance initially, leading to a model that missed 40% of frauds. After implementing SMOTE and weighted loss, detection soared, cutting losses by millions. It's a reminder that in AI, balance isn't optional; it's essential for sustainable success.
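For readers wondering what a "weighted loss" looks like in practice, here is a minimal sketch. It uses the common inverse-frequency heuristic, n_samples / (n_classes * class_count), which is the same formula scikit-learn applies for `class_weight="balanced"`. The function names and the 99-to-1 toy labels are assumptions for illustration, not the fintech team's actual setup.

```python
import math
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * count) for c, count in counts.items()}


def weighted_log_loss(y_true, p_pos, weights):
    """Mean binary cross-entropy, each sample scaled by its class weight."""
    total = 0.0
    for t, p in zip(y_true, p_pos):
        p = min(max(p, 1e-12), 1 - 1e-12)  # keep log() away from zero
        loss = -math.log(p) if t == 1 else -math.log(1 - p)
        total += weights[t] * loss
    return total / len(y_true)


# Hypothetical labels: 99 legitimate transactions (0), 1 fraud (1)
labels = [0] * 99 + [1]
weights = balanced_class_weights(labels)
print(weights)  # the rare class gets weight 50.0, the common class about 0.505

# A lazy model that assigns every transaction a 1% fraud probability
lazy_probs = [0.01] * len(labels)
plain = weighted_log_loss(labels, lazy_probs, {0: 1.0, 1: 1.0})
weighted = weighted_log_loss(labels, lazy_probs, weights)
print(f"unweighted loss = {plain:.3f}, weighted loss = {weighted:.3f}")
```

With equal weights the lazy model looks cheap to keep; once the single fraud case carries a weight of 50, the same predictions are penalized far more heavily, which is what pushes training toward catching the minority class.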

Case Studies: Real-World Applications for Better Grasp

To bring this home, let's look at three compelling case studies that illustrate these concepts in action.

Credit Card Fraud Detection: In a classic example using the Kaggle Credit Card Fraud dataset, where frauds make up just 0.17% of transactions, researchers applied SMOTE to oversample fraud cases synthetically. This boosted the model's F1-score from 0.75 to 0.92, dramatically improving recall without sacrificing precision. The anecdote here? A bank implementing similar tactics reduced annual fraud losses by 25%, turning a data headache into a competitive edge. It shows how synthetic generation shines in high-imbalance financial settings, where real fraud data is scarce.

Medical Diagnosis for Rare Diseases: A decade-long review of imbalanced medical datasets highlighted a study on breast cancer prediction, where malignant cases were vastly outnumbered. Using undersampling combined with ensemble methods like AdaBoost, the team achieved a 15-20% drop in false negatives, aiding earlier interventions. In practice, hospitals adopting these saw improved patient outcomes, with one case noting fewer misdiagnoses in underrepresented ethnic groups. This underscores the ethical imperative: In healthcare, imbalance fixes aren't just technical; they save lives by ensuring fairness across demographics.

Insurance Claims Prediction: Facing a dataset with only 5% high-risk claims, an insurance firm used a mix of random undersampling and class weighting in their XGBoost model. A case study revealed this approach lifted minority class precision by 30%, enabling better risk assessment and premium pricing. The relatable twist: What started as a "why are our predictions so off?" frustration turned into a streamlined claims process, reducing processing time and customer complaints. It's a prime example of how resampling adapts to business needs, blending tech with tangible results.

Recommended Reads

10 Techniques to Handle Imbalance Class in Machine Learning

This piece breaks down a variety of methods, from basic resampling to advanced ensembles, with code snippets for hands-on learning.

5 Effective Ways to Handle Imbalanced Data in Machine Learning

Focused on practical implementation, it covers resampling, metric shifts, and real-code examples to get you started quickly.

Handling Imbalanced Data for Classification

A thorough guide on techniques like synthetic generation and specialized algorithms, ideal for classification pros.

Trending in AI and Data Science

Let’s catch up on some of the latest happenings in the world of AI and Data Science.

Thai Data Centers Eye AI Boom

Thailand's data center capacity may surge, possibly tripling as demand for AI infrastructure grows. Investment and expansion by industry players reflect the evolving technology landscape.

Google’s AI Mode Gets Global Boost

Google expands AI Mode to 180 countries, adding personalized and agentic features for users. These updates enhance search, dining reservations, and collaborative options.

AI Smart Glasses Enter The Scene

Two Harvard dropouts launch “always-on” AI smart glasses that record and transcribe conversations, giving wearers real-time info. Privacy debates and legal concerns follow.

Trending AI Tool: imbalanced-learn

Wrapping up, I'd recommend checking out imbalanced-learn, a handy Python library that's gaining traction in 2025 for tackling data imbalances head-on. It extends scikit-learn with tools like SMOTE, ADASYN, and advanced undersamplers, making it easy to integrate into your workflows.

Learn more.
